The rest of the `parse` method loops over the selected questions:

```python
        for question in questions:
            item = StackItem()
            # set the item's title and url from `question` here,
            # ending each lookup with .extract()
            yield item
```

We are iterating through the `questions` and assigning the `title` and `url` values from the scraped data. Be sure to test out the XPath selectors in the JavaScript Console within Chrome Developer Tools.
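To see the iterate-and-assign pattern run end to end without a Scrapy project, here is a minimal sketch. It rests on several assumptions: it uses Python's standard-library `xml.etree.ElementTree` (which supports only a subset of XPath) instead of Scrapy selectors, plain dictionaries instead of `StackItem`, and a hypothetical, well-formed snippet of markup instead of a real page. The function name `parse_questions` is also made up for illustration.

```python
import xml.etree.ElementTree as ET

# Hypothetical markup standing in for a downloaded page of questions.
PAGE = """
<html><body>
  <div class="summary"><h3><a href="/questions/1">First question</a></h3></div>
  <div class="summary"><h3><a href="/questions/2">Second question</a></h3></div>
</body></html>
""".strip()


def parse_questions(markup):
    """Yield one dict per question, holding its title and url."""
    root = ET.fromstring(markup)
    # Select every heading that sits directly inside a "summary" element.
    for question in root.findall(".//div[@class='summary']/h3"):
        link = question.find("a")
        yield {"title": link.text, "url": link.get("href")}


items = list(parse_questions(PAGE))
for item in items:
    print(item["title"], item["url"])
```

Because `parse_questions` is a generator, each item is handed over as soon as it is built, which mirrors the way a spider's `parse` method yields items one at a time.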

MongoDB Robomongo tutorial update

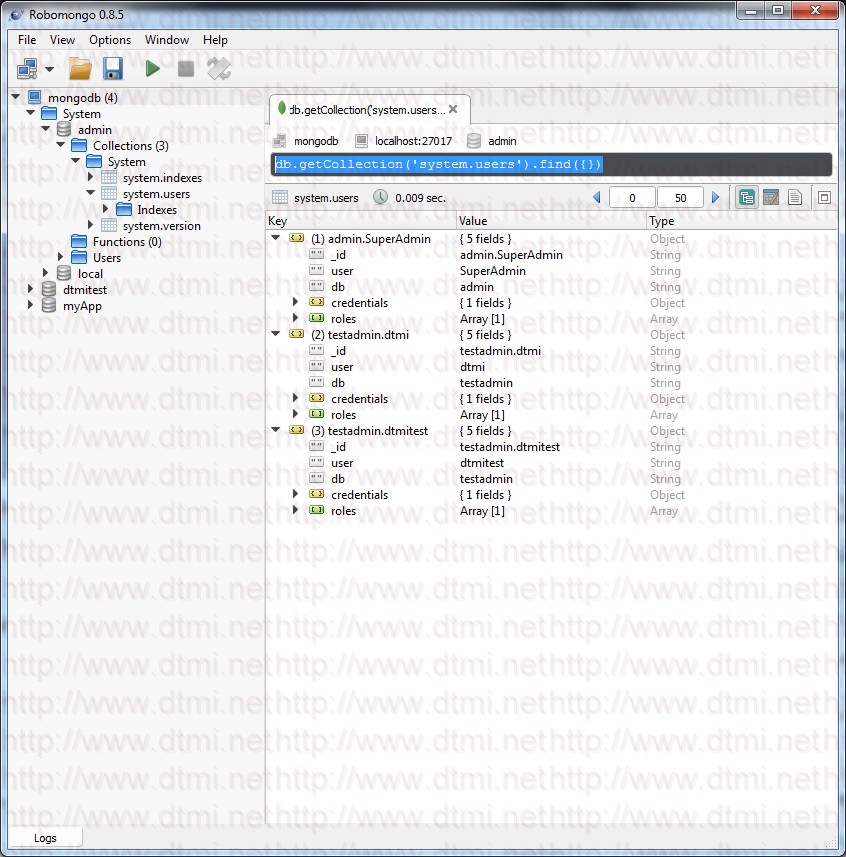

Robo 3T, formerly known as Robomongo, is a popular tool for working with MongoDB deployments. It provides a Graphical User Interface (GUI) for interacting with your databases.

I have MongoDB and Robomongo on my own system, where I use Robomongo as the client. I have also installed MongoDB on a different system, which I treat as the server, and I want to connect Robomongo on my system (the client) to MongoDB on the other system (the server).

As stated in Scrapy’s documentation, “XPath is a language for selecting nodes in XML documents, which can also be used with HTML.” In other words, we can select certain parts of the HTML data based on a given XPath. You can easily find a specific XPath using Chrome’s Developer Tools. Simply inspect a specific HTML element, copy the XPath, and then tweak it as needed.

Developer Tools also gives you the ability to test XPath selectors in the JavaScript Console by using `$x`, e.g., `$x("//img")`. Again, we basically tell Scrapy where to start looking for information based on a defined XPath.

Let’s navigate to the Stack Overflow site in Chrome and find the XPath selectors. Right click on the first question and select “Inspect Element”. Now grab the XPath for that element, and then test it out in the JavaScript Console. As you can tell, it just selects that one question, so we need to alter the XPath to grab all questions. Any ideas? It’s simple. What does the altered XPath mean? Essentially, it states: grab all the matching elements that are children of an element that has a class of `summary`. Test this XPath out in the JavaScript Console.

Notice how we are not using the actual XPath output from Chrome Developer Tools. In most cases, the output is just a helpful aside, which generally points you in the right direction for finding the working XPath.

Now let’s update the stack_spider.py script:

```python
from scrapy import Spider
from scrapy.selector import Selector
from ems import StackItem  # module path as given; adjust to your project's items module


class StackSpider(Spider):
    name = "stack"
    allowed_domains = []  # base URLs of the domains the spider may crawl
    start_urls = []  # URLs the spider starts crawling from

    def parse(self, response):
        # Pass the XPath that selects every question in place of the ellipsis.
        questions = Selector(response).xpath(...)
```
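The difference between a copied XPath that matches one question and a broader one that matches them all can also be tried outside the browser. The sketch below is only an illustration under stated assumptions: it uses Python's standard-library `xml.etree.ElementTree`, which supports a limited subset of XPath, rather than Scrapy or the Chrome console, and the markup, including the `div`/`h3` tags and the `question-summary-1` style ids, is hypothetical.

```python
import xml.etree.ElementTree as ET

# Hypothetical, well-formed markup that stands in for a page of questions.
PAGE = """
<html><body>
  <div id="question-summary-1"><div class="summary"><h3>First question</h3></div></div>
  <div id="question-summary-2"><div class="summary"><h3>Second question</h3></div></div>
</body></html>
""".strip()

root = ET.fromstring(PAGE)

# An XPath tied to one element's id matches only that single question.
one = root.findall(".//div[@id='question-summary-1']/div[@class='summary']/h3")

# Matching on the shared class instead grabs every question heading.
every = root.findall(".//div[@class='summary']/h3")

print([h3.text for h3 in one])
print([h3.text for h3 in every])
```

The first expression is the kind of output a "copy XPath" action tends to produce: precise, but bound to a single element. The second keeps only the part of the path that all questions share.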

```python
from scrapy import Spider


class StackSpider(Spider):
    name = "stack"
    allowed_domains = []  # base URLs of the domains the spider may crawl
    start_urls = []  # URLs the spider starts crawling from
```

The first few variables are self-explanatory (see the docs):

- `allowed_domains` contains the base URLs for the allowed domains for the spider to crawl.
- `start_urls` is a list of URLs for the spider to start crawling from. All subsequent URLs will start from the data that the spider downloads from the URLs in `start_urls`.

Next, Scrapy uses XPath selectors to extract data from a website.
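To make the role of `allowed_domains` more concrete, here is a rough sketch of the kind of check it implies: a URL is followed only if its host is one of the allowed domains or a subdomain of one. This is not Scrapy's actual implementation; the helper `is_allowed` and the `example.com` values are hypothetical and exist only for illustration.

```python
from urllib.parse import urlparse


def is_allowed(url, allowed_domains):
    """Return True if the URL's host is an allowed domain or a subdomain of one."""
    host = urlparse(url).hostname or ""
    return any(
        host == domain or host.endswith("." + domain)
        for domain in allowed_domains
    )


# Hypothetical values standing in for a spider's settings.
ALLOWED_DOMAINS = ["example.com"]
START_URLS = ["http://example.com/questions"]

for url in START_URLS + ["http://sub.example.com/page", "http://other.org/"]:
    print(url, is_allowed(url, ALLOWED_DOMAINS))
```

Comparing against `"." + domain` rather than the bare domain keeps a host such as `notexample.com` from slipping through just because it ends with the same characters.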